- Hot Topics in Mass Spectrometry

- Volume 40

- Issue s9

- Pages: 10–13

What’s Your Workflow? Non-Targeted Analysis of Water Samples

Are generic workflows really needed in environmental analysis? In the end, it is the analyst, not the instrumentation or software, who is in charge of what data are obtained, after thoughtfully considering which sample preparation and acquisition method to use.

In recent years, there has been an effort to categorize how emerging contaminants are identified in water samples by liquid chromatography–mass spectrometry (LC–MS). This process is often referred to as a workflow, and it has been the focus of many talks and conference sessions around the world. Many scientists have looked to unify and generalize the workflow, but do we really need a generic workflow to identify a non-target in an environmental sample? Would the scientific method, applied to each individual problem, be sufficient and reliable?

One would not want to write about a topic without defining the key terms that are used frequently in the environmental community. When focusing on non-targeted screening for environmental contaminants in mass spectrometry (MS), we often use terms such as target, non-target, suspect, and unknowns.

There is no doubt about what a target analyte constitutes. It is one that the user is looking for, for which a standard is available (usually in the laboratory), and the properties of which are already known, such as its chromatographic retention time, tandem mass spectrometry (MS/MS) spectra, and chemical structure.

The ambiguity begins when one refers to non-targets. These compounds might be analytes that one is not looking for, but for which standards are available somewhere (they might even be in the laboratory freezer, but one has not prepared or analyzed them!). They could also be compounds that one is not aware of (such as new pesticides coming onto the market or new pharmaceuticals being prescribed). Once a non-targeted compound is discovered, it becomes a target. Each individual user will have a different approach for this particular process. Mine is usually to analyze the standard of the newly identified compound (if available), obtain the accurate mass, record the retention time, perform an MS/MS analysis, and understand the fragmentation of the analyte. It is then that it becomes a target.

However, my problem starts when someone talks about suspects. What are those? Some may claim those are analytes that are in a database but for which the user does not have the standard. As a result, the suspect compound has never been analyzed through the methodology; therefore, nothing is known about the compound. But wait, aren’t we getting ahead of ourselves? Databases are part of a workflow scheme, and we have not gotten to that yet. Suspect is the most ambiguous term of all. Do you really have to start the process by suspecting which compounds will be in your sample? How often do you do that? Again, it depends on your approach, what type of sample you have, and what the end goal is for it. For now, I will leave this term up in the air. We have yet to talk about workflows.

Unknown is next. This term gives me the most trouble. What really is an unknown? How do we define it? It might be unknown to you, but not to me, or vice versa. Wouldn’t that be the same as a non-target, a compound someone knows is out there in the environment, but for which the particular user does not own the standard and which has never been analyzed using the method? Once the standard for that compound is analyzed, it becomes a target. To me, the term unknown would be more fitting for a new natural product, such as one discovered in plants, which has never been reported before. It might also be some analyte for which the standard does not exist commercially yet, some new drug that has just been developed, or some pesticide that has just been manufactured and is ready to be released. The environmental community has used the term unknown to describe compounds that are not in databases, and compounds that the user had no idea were out there. To me, that is more of an educated guess. Once you have gathered enough information and done your research, you should know what that particular unknown (to you) is!

In my view, the term unknown is relative to the observer. One might be an experienced analyst and realize some of those non-targets, suspects, or unknowns are in fact targets and very well-known compounds that just happen to be in one’s memory. I myself have memorized hundreds of m/z values for pesticides and pharmaceuticals and can quickly make an identification. That comes with experience, and everybody’s learning curve is different. So, when defining terms, I think one should be aware of experience, knowledge, and the methodology used by the analyst. A beginner analyst might need another set of terms, and maybe a different approach.

This might be a simplistic view, but it has proven to work time after time. To me, there are no suspect compounds or even unknowns; a compound is either a target (you have the standard in your laboratory) or a non-target (you might not even know that the compound exists). Here, I give some examples and refer to some of our group's published work on non-target analyses.

Instrumentation for Non-Targeted Analysis: High-Resolution Mass Spectrometry (HRMS)

There is no doubt that the most commonly used and accepted methodology for non-targeted analysis is high-resolution accurate mass spectrometry. It has been approximately 25 years since the development of the first benchtop liquid chromatography (LC)–accurate mass MS instruments using time-of-flight (TOF) or high-resolution MS (HRMS). The instrumentation has evolved tremendously, making these instruments very sensitive, with high resolving power capable of distinguishing between very close isobaric compounds (which have the same nominal mass but different accurate masses). The environmental community has finally accepted these methodologies as unique and capable of identifying an analyte in a sample. Back in the early 2000s, there was still a debate in food and veterinary chemistry about what constituted a positive finding, with a point system that depended on whether low-resolution mass spectrometry or HRMS was used (1).
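
To make the idea of isobaric compounds concrete, here is a minimal Python sketch. The two formulas are a hypothetical pair chosen only because they share a nominal mass; the helper names (`monoisotopic_mass`, `ppm_error`, `resolving_power_needed`) are illustrative and do not come from any vendor software. The resolving power estimate uses the simple m/Δm definition and ignores peak shape.

```python
import re

# Monoisotopic masses (Da) of the most abundant isotopes.
MONO = {"C": 12.0, "H": 1.00782503207, "N": 14.0030740048, "O": 15.99491461956}


def parse_formula(formula):
    """Turn 'C10H14N2O' into {'C': 10, 'H': 14, 'N': 2, 'O': 1}."""
    counts = {}
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] = counts.get(element, 0) + int(number or 1)
    return counts


def monoisotopic_mass(formula):
    """Accurate (monoisotopic) mass of a neutral formula."""
    return sum(MONO[el] * n for el, n in parse_formula(formula).items())


def ppm_error(measured, theoretical):
    """Mass error in parts per million."""
    return (measured - theoretical) / theoretical * 1e6


def resolving_power_needed(mass_a, mass_b):
    """Approximate m/delta-m required to separate two neighboring masses."""
    return ((mass_a + mass_b) / 2) / abs(mass_a - mass_b)


# Hypothetical isobaric pair: same nominal mass, different accurate mass.
a = monoisotopic_mass("C10H14N2O")
b = monoisotopic_mass("C11H14O2")
print(f"{a:.4f} vs {b:.4f} (nominal {round(a)} and {round(b)})")
print(f"resolving power needed: {resolving_power_needed(a, b):,.0f}")
```

A low-resolution instrument reports both formulas at the same nominal mass, whereas an accurate mass measurement within a few ppm separates them.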

Moreover, there have been attempts to use tandem MS instruments for non-targeted analyses, and those are valid as long as one follows the golden rules of MS and identification. In fact, ion traps were used at the beginning to aid in the identification of non-targeted analytes because of their fragmentation powers and structural elucidation capabilities. Those instruments were often underestimated as to what could be identified in a sample following a few simple approaches.

This overview focuses on using accurate mass MS for the identification of non-targeted compounds.

Workflow and the Identification Process

The term workflow is often used to refer to the process that leads to an identification of an analyte in a sample. To me, the identification process is simple, maybe because I learned to do it before any workflow software was available. But new generations of analysts have lost sight of the “golden rules” of MS, and other processes that are intuitive and follow pattern recognition. But I would still ask, do we really need a workflow?

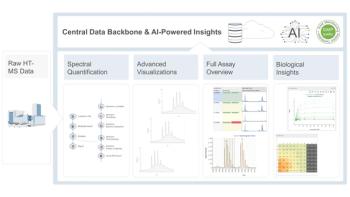

As an author, reviewer, and editor, I have become aware of the increase in papers relating to workflows for non-targeted analyses. There has been an effort to unify this process to make it easier for the analyst to identify as many compounds as possible in a given sample. One important aspect of a workflow is the software. Nowadays, every accurate mass MS instrument manufacturer has its own software package that aids the user in the identification process. However, some of these software programs are not easy to use, have tedious learning curves, and are quite expensive. On top of that, software does not guarantee that you will correctly identify everything that is in a sample. False positives and negatives are the most common drawbacks encountered. In the next subsections, I discuss what I think needs to be considered when identifying non-targets in samples.

Sample Preparation

Sometimes, the most important process in environmental analysis is forgotten: sample preparation. Every sample, every project, and every problem is different. Sample preparation is an important step in environmental analysis, and it will determine which compounds you identify or don’t identify at the end. For example, fire-related compounds that are organic acids need a pH adjustment before the sample is processed through solid-phase extraction (SPE). If that step is not done, then no identification of polycarboxylic acids will be possible (2). Some workflows described in the literature do not take into account the important step of sample preparation.

Acquisition Method

There are myriad instruments and software packages, so where does one start? How about not having a generic workflow? How about developing a unique workflow each time a sample arrives in your laboratory? After giving careful consideration to sample preparation, one has to decide which acquisition method to use. That choice alone will also determine what we will be able to identify. There are quite a few different acquisition methods, depending on the instrumentation and manufacturer. Mainly, there are two methods that I can generalize when using quadrupole HRMS: data-independent acquisition and data-dependent acquisition. In my view, data-independent acquisition (using a high in-source voltage) has been proven very effective in identifying compounds and their metabolites as a first step (3). Of course, data-dependent acquisition (MS/MS triggered) is also a highly effective approach for unequivocal identification using MS/MS spectra, spectral libraries, and chemical structural elucidation.

Formula Generation

Depending on which workflow one follows, at some point there is a step that involves formula generation from a measured accurate mass. Again, it is the user (not the software!) who has to set the elements that are included in the formula generation. A visual inspection of the mass spectral data will reveal whether a sulfur atom or halogen has to be included. For pesticide analysis, phosphorus and fluorine are also elements to consider. These are all user-dependent parameters; the analyst has to make the choice and guide the software, not the other way around. Thus, the analyst should be well trained in accurate mass MS analysis. In one study, for example, halogen signals proved to be a key to the identification of a new anti-depressant in water samples (4).
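
The effect of the element choice can be sketched with a small brute-force formula generator in Python. This is a simplified illustration, not how any commercial package works: the function name `candidate_formulas`, the element limits, the 5 ppm tolerance, and the measured m/z value are all assumptions, and the only chemical filter applied is a non-negative, integer ring-and-double-bond equivalent (RDBE) for the neutral molecule.

```python
from itertools import product

# Monoisotopic masses (Da); PROTON is the mass of H minus one electron.
MONO = {"C": 12.0, "H": 1.00782503207, "N": 14.0030740048,
        "O": 15.99491461956, "Cl": 34.96885268}
PROTON = 1.00727646688

# Assumed upper limits per element; the analyst would set these.
LIMITS = {"C": 20, "H": 40, "N": 6, "O": 6, "Cl": 3}


def candidate_formulas(mz, elements, max_counts, ppm=5.0):
    """List neutral formulas whose [M+H]+ lies within `ppm` of `mz`."""
    neutral = mz - PROTON
    heavy = [e for e in elements if e != "H"]
    hits = []
    for counts in product(*(range(max_counts[e] + 1) for e in heavy)):
        n = dict(zip(heavy, counts))
        base = sum(MONO[e] * k for e, k in n.items())
        # Only the nearest integer number of hydrogens can fit the tolerance.
        n_h = round((neutral - base) / MONO["H"])
        if not 0 <= n_h <= max_counts["H"]:
            continue
        n["H"] = n_h
        theoretical = base + n_h * MONO["H"] + PROTON
        error = (mz - theoretical) / theoretical * 1e6
        if abs(error) > ppm:
            continue
        # Twice the RDBE of the neutral molecule: must be even and >= 0.
        rdbe2 = 2 * n.get("C", 0) - n_h - n.get("Cl", 0) + n.get("N", 0) + 2
        if rdbe2 < 0 or rdbe2 % 2:
            continue
        formula = "".join(f"{e}{n[e] if n[e] > 1 else ''}" for e in elements if n[e])
        hits.append((formula, round(error, 2)))
    return sorted(hits, key=lambda hit: abs(hit[1]))


measured = 256.0151  # hypothetical [M+H]+ with a visible two-chlorine pattern
print(candidate_formulas(measured, ("C", "H", "N", "O", "Cl"), LIMITS))
print(candidate_formulas(measured, ("C", "H", "N", "O"), LIMITS))
```

If chlorine is left out of the element list, the dichlorinated candidate can never appear, no matter how accurate the measurement is. That decision belongs to the analyst who has looked at the isotope pattern.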

Databases and Libraries

Then, there are the databases. There are hundreds of them, and accurate mass MS data for each individual compound and its fragments can be acquired easily nowadays. There are homemade and commercial databases. Again, the database that is used determines what you find at the end. There have been attempts to have accurate mass spectral libraries as well, but each instrument is different and will give different ions and abundances for each fragment ion, depending on collision energy. Therefore, one has to decide if that is the best approach for a given sample. The advantage of using generic accurate mass databases is that the accurate mass of the protonated or deprotonated molecule is universal; it does not depend on the instrument or acquisition method used (5).
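
As an illustration of why an accurate mass database is instrument independent, the following Python sketch searches a tiny in-memory database by the calculated m/z of the protonated or deprotonated molecule. The three entries, the `search` function, and the 5 ppm window are assumptions made for the example; a real database would hold many more compounds and usually retention times and fragments as well.

```python
import re

# Monoisotopic masses (Da); PROTON is the mass of H minus one electron.
MONO = {"C": 12.0, "H": 1.00782503207, "N": 14.0030740048,
        "O": 15.99491461956, "Cl": 34.96885268}
PROTON = 1.00727646688

# Hypothetical homemade database: compound name -> neutral formula.
DATABASE = {
    "caffeine": "C8H10N4O2",
    "atrazine": "C8H14ClN5",
    "carbamazepine": "C15H12N2O",
}


def neutral_mass(formula):
    """Monoisotopic mass of a neutral formula such as 'C8H14ClN5'."""
    return sum(MONO[el] * int(n or 1)
               for el, n in re.findall(r"([A-Z][a-z]?)(\d*)", formula))


def search(measured_mz, polarity="positive", ppm=5.0):
    """Return (name, theoretical m/z, ppm error) for entries within tolerance."""
    # [M+H]+ adds a proton; [M-H]- removes one.
    shift = PROTON if polarity == "positive" else -PROTON
    hits = []
    for name, formula in DATABASE.items():
        theoretical = neutral_mass(formula) + shift
        error = (measured_mz - theoretical) / theoretical * 1e6
        if abs(error) <= ppm:
            hits.append((name, round(theoretical, 4), round(error, 1)))
    return hits


print(search(216.1012))  # hypothetical measured m/z in positive ion mode
```

The lookup relies only on the elemental formula, so the same table serves any accurate mass instrument. A hit is still only a candidate until retention time and fragmentation confirm it.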

Therefore, the approach one follows is highly dependent on the sample type, sample preparation, acquisition method used, software (or lack thereof) used to extract data, and finally, user interpretation. Can that be unified into one general workflow? I don’t think so. The analyst still has to do the final interpretation. The instrument will give you accurate data (assuming it is well calibrated and performing correctly), and the software will guide you and try to interpret, but only to a point. The final decision is based on the knowledge, data, and experience of the analyst. That is what will prevail at the end.

Golden Rules of Mass Spectrometry

I mentioned above some of the golden rules in MS that have been forgotten or seldom considered. I will comment on a few of them here.

- It might seem obvious, but accurate mass has to be calculated correctly. Not too long ago, there were still some software programs that did not take into account the mass of an electron when calculating accurate mass (6).

- A second rule is the nitrogen rule, which is an easy one to take into account if we only consider even-electron ions for formula generation. However, there are software programs that will not account for this (7).

- Mass defect has proven to be very useful for the identification of homologous series (such as surfactants) by measuring differences in alkyl chain lengths (8).

- Obvious halogen and sulfur isotopic signals (abundance and intensity) are also highly useful in the structural elucidation and identification of compounds.

- There is the potential for adduct formation with sodium, ammonium, and potassium. The identification of sucralose is an example of considering the formation of a strong sodium adduct in positive ion mode (9).

- Labile analytes fragment easily and do not show a protonated or deprotonated ion; instead, one of the fragments is observed in electrospray. Examples of this are diphenhydramine and bupropion.

- Finally, we cannot forget fragmentation patterns and fragmentation trees, some of which have also been included in advanced software programs. Again, the user has to guide the software to make an unequivocal and ultimate identification.
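
Several of these rules reduce to simple arithmetic, sketched below in Python under stated assumptions. The function names are illustrative. The nitrogen rule check applies to even-electron ions only and takes the nitrogen count of the ion, so an ammonium adduct counts one extra nitrogen. The Kendrick mass defect on the CH2 scale is one common way to exploit mass defect for homologous series and is not necessarily the procedure used in reference (8).

```python
import re

# Monoisotopic masses (Da) and the electron mass.
MONO = {"C": 12.0, "H": 1.00782503207, "N": 14.0030740048,
        "O": 15.99491461956, "Na": 22.9897692809, "K": 38.96370668,
        "Cl": 34.96885268}
ELECTRON = 0.000548579909

# Adduct -> (atoms gained or lost, ion charge).
ADDUCTS = {
    "[M+H]+": ({"H": 1}, +1),
    "[M+Na]+": ({"Na": 1}, +1),
    "[M+K]+": ({"K": 1}, +1),
    "[M+NH4]+": ({"N": 1, "H": 4}, +1),
    "[M-H]-": ({"H": -1}, -1),
}


def neutral_mass(formula):
    """Monoisotopic mass of a neutral formula such as 'C12H19Cl3O8'."""
    return sum(MONO[el] * int(n or 1)
               for el, n in re.findall(r"([A-Z][a-z]?)(\d*)", formula))


def ion_mz(formula, adduct, include_electron=True):
    """m/z of a singly charged ion; optionally ignore the electron mass."""
    delta, charge = ADDUCTS[adduct]
    mass = neutral_mass(formula) + sum(MONO[el] * n for el, n in delta.items())
    if include_electron:
        mass -= charge * ELECTRON  # cations lose an electron, anions gain one
    return mass / abs(charge)


def nitrogen_rule_ok(nominal_mz, n_nitrogen):
    """Even-electron ion: even nominal m/z goes with an odd nitrogen count."""
    return (nominal_mz % 2 == 0) == (n_nitrogen % 2 == 1)


def kendrick_mass_defect(mass):
    """Kendrick mass defect on the CH2 scale (CH2 = 14.00000 exactly)."""
    ch2 = MONO["C"] + 2 * MONO["H"]
    kendrick_mass = mass * 14.0 / ch2
    return round(kendrick_mass) - kendrick_mass


right = ion_mz("C8H10N4O2", "[M+H]+")
wrong = ion_mz("C8H10N4O2", "[M+H]+", include_electron=False)
print(f"ignoring the electron costs {(wrong - right) / right * 1e6:.1f} ppm")
print(f"sucralose [M+Na]+ = {ion_mz('C12H19Cl3O8', '[M+Na]+'):.4f}")
print(nitrogen_rule_ok(216, 5), nitrogen_rule_ok(216, 4))
print(kendrick_mass_defect(neutral_mass("C10H22O")),
      kendrick_mass_defect(neutral_mass("C12H26O")))
```

At m/z values near 200, leaving out the electron shifts the calculated mass by a few ppm, which is comparable to the tolerance typically used for formula assignment. The two alcohols in the last line differ by two CH2 units and therefore share the same Kendrick mass defect.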

Conclusion

HRMS instruments are capable of unequivocally identifying many targeted and non-targeted analytes. The approach to follow will always depend on the type of sample, the analyst’s knowledge, the end goal to achieve, and the tools available (software packages). Generic workflows are not necessarily needed because rapidly changing variables and parameters can be used, depending on the need of each individual problem and user. Trying to unify all these complex pathways in a single workflow or identification system will never work. In the end, it is the analyst who is in charge of the data obtained from the instrument. The instrument produces the data, and the software makes an interpretation, but the analyst has to guide the whole process for successful analysis.

References

(1) A.A.M. Stolker, E. Dijkman, W. Niesing, and E.A. Hogendoorn, ACS Symp. Ser. 850, 32–49 (2003).

(2) I. Ferrer, E.M. Thurman, J.A. Zweigenbaum, S.F. Murphy, J.P. Webster, and F.L. Rosario-Ortiz, Sci. Total Environ. 770, 144661 (2021).

(3) I. Ferrer and E.M. Thurman, J. Chromatogr. A 1259, 148–157 (2012).

(4) I. Ferrer and E.M. Thurman, Anal. Chem. 82, 8161–8168 (2010).

(5) E.M. Thurman, I. Ferrer, O. Malato, and A.R. Fernández-Alba, Food Addit. Contam. 23(11), 1169–1178 (2006).

(6) I. Ferrer and E.M. Thurman, Rapid Commun. Mass Spectrom. 21(15), 2538–2539 (2007).

(7) E.M. Thurman, I. Ferrer, O.J. Pozo, J.V. Sancho, and F. Hernandez, Rapid Commun. Mass Spectrom. 21(23), 3855–3868 (2007).

(8) E.M. Thurman and I. Ferrer, Compr. Anal. Chem. 79, 125–145 (2018).

(9) I. Ferrer, J.A. Zweigenbaum, and E.M. Thurman, Anal. Chem. 85(20), 9581–9587 (2013).

Imma Ferrer is with the University of Colorado, in Boulder, Colorado. Direct correspondence to:
